Design Thinking for Music Production

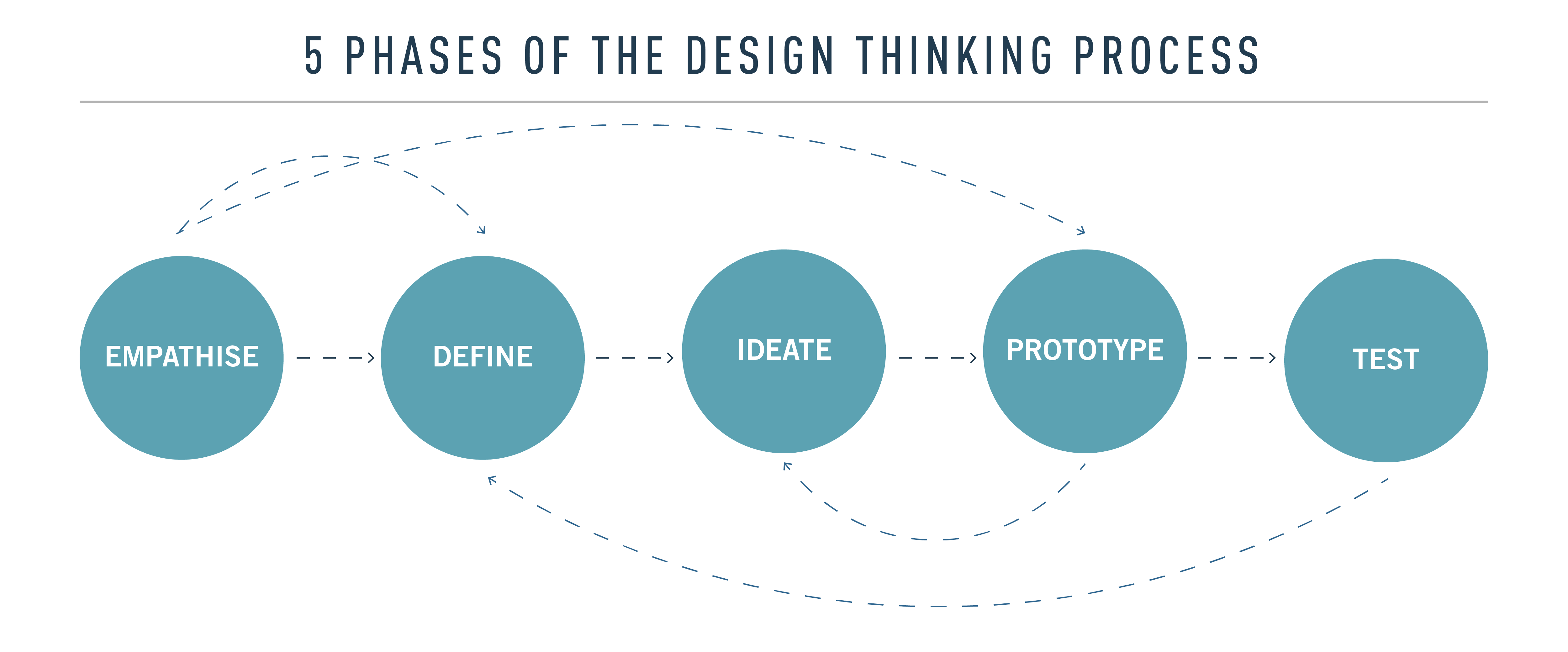

If you work in a field concerned with visual design, you have probably been reading a lot about design thinking. In this post, I will describe how I use design thinking for music. If you haven’t heard of design thinking before, here’s a definition:

“Design Thinking is a design methodology that provides a solution-based approach to solving problems.”

Design thinking for music is relatively simple, and I’d like to show you how to apply its concepts to music to get the same benefits visual designers do. This is a complementary post to my previous one about the importance of having a mindset oriented toward building a system; the two go hand in hand.

Empathy/Attention

I have been working with a student in a private sound design class. When a student comes for multiple sessions, we get to a point where we do critical listening; this is probably the most difficult part of sound design. I don’t mean difficult in terms of how-to, but in terms of listening technically to music you love in order to break down the “magic” you love about it. If you know exactly how a piece of music is made, it’s sometimes difficult to appreciate it again. It’s the curse of knowing how things are made, whether movies, music, or food. It’s good if you want to know how to make it yourself, but it can also make you jaded.

Anyhow, to get back to my story, we were listening to some experimental music and I was asking my student these questions:

Quickly focus on the overall view of the song and try to grasp what is static vs. what is not. What grabs your attention first, and why?

Focus on a sound that you like. What do you observe in its shape? Is the pitch changing? Is the length of sound changing? Are the frequencies being altered?

Basically, you need to empathize with the sound, examine how it behaves, and determine what makes it attractive to you. The more you connect to the sound and learn how it moves along these axes, the better you’ll be able to create a concept to replicate it. I usually try to name the sound in my own terms and see if I can hear it in multiple other songs. For a long time, and even now, I’ve been very attracted to sounds that feel “wet”, watery and bubbly. It was difficult for me to find a technical term, so I’d refer to them as bubbles. I love them for how they make a song feel alive.

Define the sound

Being able to describe exactly what is going on is where a lot of people get stuck. The idea here is partly to understand the axes of the sound, which ones are used, and how. Those axes are:

Time: Is the sound short or long?

Pitch: Is the sound high pitched or low?

Frequency: Which frequency ranges does the sound occupy overall? Is it bright, muffled, or heavy in the mids?

Amplitude: Is the sound low or high volume?

Position – Left/Right: Where is the sound in the space?

Position – Far/Close: Is the sound right in front of me or far away?

Modulation: Is the sound changing on any of those axes over time?

It might be overwhelming, but analyzing sounds this way can really help create a concept, or an idealization.
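If it helps, the axes above can be written down as a small listening-notes record. This is only a sketch of how I might jot things down; the class name, the field names, and the example values for my "bubble" sound are all made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical note-taking structure: one field per axis from the list above.
AXES = ("duration", "pitch", "frequency", "amplitude", "pan", "depth")

@dataclass
class SoundProfile:
    name: str
    duration: str          # Time: "short" or "long"
    pitch: str             # Pitch: "low" or "high"
    frequency: str         # Frequency: e.g. "muffled", "bright"
    amplitude: str         # Amplitude: "quiet" or "loud"
    pan: str               # Position Left/Right: "left", "center", "right"
    depth: str             # Position Far/Close: "close" or "far"
    modulated: tuple = ()  # Modulation: axes that change over time

    def moving_axes(self):
        """Axes that are being modulated."""
        return sorted(self.modulated)

    def static_axes(self):
        """Axes that stay put for the whole sound."""
        return sorted(set(AXES) - set(self.modulated))

# Invented example values, not a measurement of any real sound.
bubble = SoundProfile(
    name="bubble", duration="short", pitch="high", frequency="bright",
    amplitude="quiet", pan="center", depth="close",
    modulated=("pitch", "pan"),
)
print(bubble.moving_axes())
print(bubble.static_axes())
```

Separating the moving axes from the static ones tells you which modulators you will need later.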

Ideate and Prototype

At this point, your sound should be defined as best you can. We then take all of the axes of the sound, one-by-one, to see how certain effects can make a difference.

Time: Think envelope. When you’re using a synth, for instance, the envelope determines whether the sound is short or long, depending on how you set the Attack/Decay/Sustain/Release. If the sound constantly changes length, it means the envelope is being modulated.
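To make the envelope idea concrete, here is a minimal sketch of a linear ADSR in plain Python. Real synths often use curved segments, so treat the straight lines, the function names, and the "pluck" and "pad" settings as my own assumptions.

```python
def held_level(t, attack, decay, sustain):
    """Envelope level while the note is held (times in seconds, level 0..1)."""
    if t < attack:
        return t / attack                      # ramp up to full level
    if t < attack + decay:
        return 1.0 - (1.0 - sustain) * (t - attack) / decay  # fall to sustain
    return sustain

def adsr(t, attack, decay, sustain, release, note_length):
    """Envelope level at time t for a note held for note_length seconds."""
    if t < 0:
        return 0.0
    if t < note_length:
        return held_level(t, attack, decay, sustain)
    # After note-off, fade linearly from whatever level was reached.
    start = held_level(note_length, attack, decay, sustain)
    elapsed = t - note_length
    if elapsed >= release:
        return 0.0
    return start * (1.0 - elapsed / release)

# Two illustrative settings: a short pluck and a long pad.
pluck = dict(attack=0.005, decay=0.08, sustain=0.0, release=0.05)
pad = dict(attack=0.5, decay=0.3, sustain=0.8, release=1.0)

for name, env in (("pluck", pluck), ("pad", pad)):
    levels = [round(adsr(t / 10, note_length=1.0, **env), 2) for t in range(0, 21, 2)]
    print(name, levels)
```

With the same note length, the pluck is already silent while the pad is still rising, which is the "short or long" difference you hear.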

Pitch: This one should be pretty straightforward: pitch is simply tonal frequency shifting. If your sound changes pitch quickly or slowly, it’s likely an LFO or an envelope modulating the pitch.
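As a rough illustration of an LFO moving the pitch, the sketch below shifts a base frequency up and down by a number of semitones with a sine wave. The function name and parameter values are hypothetical; the only fixed fact is that one semitone is a frequency ratio of 2 to the power 1/12.

```python
import math

def lfo_pitch(base_hz, t, rate_hz, depth_semitones):
    """Frequency at time t (seconds) when a sine LFO modulates the pitch."""
    offset = depth_semitones * math.sin(2 * math.pi * rate_hz * t)
    return base_hz * 2 ** (offset / 12)

# A slow, wide wobble vs. a fast, narrow vibrato on the same 440 Hz note.
for label, rate, depth in (("slow wobble", 0.5, 7.0), ("fast vibrato", 6.0, 0.3)):
    sweep = [round(lfo_pitch(440.0, t / 20, rate, depth), 1) for t in range(6)]
    print(label, sweep)
```

Rate sets how quickly the pitch moves and depth sets how far, which are the two things to listen for when you analyze the sound.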

Frequency: By frequency here I mean the overall tonal balance, which is EQ- or filter-related, not pitch. If the sound is muffled, there might be a filter applied (typically a low-pass). If the sound feels rich, it could be a shelving EQ applied in the mids. Playing with an EQ can dramatically alter a sound in many ways, so it’s an important tool.
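Here is a small sketch of why a filter makes a sound feel muffled. It uses a very simple one-pole low-pass, which is far gentler than the filters in a DAW; the helper names and the 500 Hz cutoff are assumptions I picked for the demonstration.

```python
import math

SAMPLE_RATE = 44100

def sine(freq_hz, n_samples):
    """A plain sine tone with a peak level of about 1.0."""
    return [math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE) for n in range(n_samples)]

def one_pole_lowpass(samples, cutoff_hz):
    """Smooth the signal so content above cutoff_hz is progressively reduced."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / SAMPLE_RATE)
    y, out = 0.0, []
    for x in samples:
        y += a * (x - y)   # move a fraction of the way toward the input
        out.append(y)
    return out

def peak(samples):
    return max(abs(s) for s in samples)

low_tone = one_pole_lowpass(sine(100, 4410), cutoff_hz=500)
high_tone = one_pole_lowpass(sine(8000, 4410), cutoff_hz=500)
print("100 Hz peak after filter:", round(peak(low_tone), 3))
print("8 kHz peak after filter:", round(peak(high_tone), 3))
```

The low tone passes almost untouched while the high tone is heavily reduced, which is what you hear as "muffled".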

Amplitude: This is controlled with gain, most simply through a utility plugin. I recommend that when you do sound design, you use your DAW’s utility plugins to control gain. It becomes much easier to see and understand what is going on when you re-open a project.
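Gain is usually shown in decibels, so it helps to know what the number does to the signal. The sketch below uses the standard amplitude conversion (20 times the base-10 logarithm); the function names are mine.

```python
def db_to_gain(db):
    """Convert a gain in decibels to a linear amplitude multiplier."""
    return 10 ** (db / 20)

def apply_gain(samples, db):
    """Scale every sample by the given gain in decibels."""
    factor = db_to_gain(db)
    return [s * factor for s in samples]

for db in (0, -6, -12, -20):
    print(db, "dB ->", round(db_to_gain(db), 3))
```

Roughly every 6 dB down halves the amplitude, which is a handy rule of thumb when you are comparing gain settings in a project.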

Position – Left/Right: Again, use a utility plugin to adjust the panning, left and right.
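Under the hood, panning is just two gains, one per speaker. The sketch below uses a constant-power (sine/cosine) pan law, which is a common choice; I'm not claiming it is the law your DAW's utility plugin uses, and the function name is hypothetical.

```python
import math

def pan_gains(pan):
    """Return (left, right) gains for pan in [-1.0, 1.0]; -1 is hard left."""
    pan = max(-1.0, min(1.0, pan))
    angle = (pan + 1.0) * math.pi / 4.0   # 0 (left) to pi/2 (right)
    return math.cos(angle), math.sin(angle)

for position in (-1.0, -0.5, 0.0, 0.5, 1.0):
    left, right = pan_gains(position)
    print(position, round(left, 3), round(right, 3))
```

With this law the combined power of the two channels stays the same wherever the sound sits, so it doesn't seem to get quieter as it moves.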

Position – Far/Close: This position is a bit more difficult to understand and achieve. Basically, this is EQ related, but it also has to do with filters. When you apply a high-pass filter to a sound, the more low end you filter out, the farther you push the sound away from you. You can also lower the gain to make things feel farther away, or use a reverb to add a bit of dimensional movement.
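Two of those ideas, the high-pass filter and the lower gain, can be combined in one "distance" control. This is a toy sketch: the one-pole filter, the 20 Hz to 500 Hz cutoff range, and the 12 dB drop are arbitrary assumptions, and reverb is left out entirely.

```python
import math

SAMPLE_RATE = 44100

def push_back(samples, distance):
    """distance 0.0 keeps the sound close, 1.0 pushes it far away."""
    distance = max(0.0, min(1.0, distance))
    cutoff_hz = 20.0 + distance * 480.0    # assumed range: 20 Hz to 500 Hz
    gain = 10 ** (-12.0 * distance / 20)   # assumed range: 0 dB to -12 dB
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / SAMPLE_RATE)
    low, out = 0.0, []
    for x in samples:
        low += a * (x - low)               # one-pole low-pass tracks the low end
        out.append((x - low) * gain)       # removing it leaves a high-passed signal
    return out

# A 60 Hz bass tone, 0.2 seconds long.
bass = [math.sin(2 * math.pi * 60 * n / SAMPLE_RATE) for n in range(8820)]
near = push_back(bass, 0.0)
far = push_back(bass, 1.0)
print("near peak:", round(max(abs(s) for s in near), 3))
print("far peak:", round(max(abs(s) for s in far), 3))
```

The bass tone nearly disappears at the far setting, which matches the feeling of a sound losing its body as it moves away.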

In this prototyping phase, I usually gather together the tools I need to make a sound, and will also gather the appropriate modulators (Envelope, LFO). This approach is very modular-oriented. People who own a modular synth often proceed this way. You think of the sound, what will alter it, and determine the sources of modulation.

In your prototype development, you’ll be creating a chain of effects as a macro in Ableton. Those effects will be modulating, changing, and altering the sounds that pass through them. This takes time to nail down correctly, but the fun part is passing a whole range of sounds through your macro, be it generic synth sounds, percussion, or random field recordings. You might discover that what comes out of your prototype is completely different from your original concept, but the end result might be even more interesting.
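Outside of any DAW, the same idea can be sketched as a chain of small functions that each take a sound and return a changed one. This is not how Ableton works internally; the effect names, settings, and the `chain` helper are all hypothetical and only illustrate the "build once, feed it anything" workflow.

```python
import math
from functools import reduce

SAMPLE_RATE = 44100

def gain(db):
    factor = 10 ** (db / 20)
    return lambda samples: [s * factor for s in samples]

def tremolo(rate_hz, depth):
    """Volume wobble driven by a sine LFO; depth 0..1."""
    def effect(samples):
        out = []
        for n, s in enumerate(samples):
            lfo = 0.5 * (1.0 + math.sin(2 * math.pi * rate_hz * n / SAMPLE_RATE))
            out.append(s * (1.0 - depth * lfo))
        return out
    return effect

def clip(limit):
    """Hard-limit every sample to the range -limit..limit."""
    return lambda samples: [max(-limit, min(limit, s)) for s in samples]

def chain(*effects):
    """Combine effects into one reusable processor, applied left to right."""
    def process(samples):
        return reduce(lambda signal, fx: fx(signal), effects, samples)
    return process

rack = chain(gain(6.0), tremolo(rate_hz=4.0, depth=0.5), clip(0.8))

# Feed very different material through the same chain.
synth = [math.sin(2 * math.pi * 220 * n / SAMPLE_RATE) for n in range(1000)]
click = [1.0] + [0.0] * 999
for name, source in (("synth", synth), ("click", click)):
    result = rack(source)
    print(name, round(max(abs(s) for s in result), 3))
```

Because the chain is a single object, you can save it and run old material through it later without rebuilding anything.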

Stay open to the outcome of your sound even if it’s different from the original sound you were trying to imitate. Record everything. Save your macro and start using it in old unfinished songs to recycle some of your old work.
