Using Modular Can Change the Way You View Music Production

Are “sound design” and “sequencing” mutually exclusive concepts? Do you always do one before you do the other? What about composition—how does that fit in? Are all of these concepts fixed, or do they bend and flex and bleed into one another?

The answers to these questions might depend on the specific workflows, techniques, and equipment you use.

Take, for example, an arpeggiator in a synth patch. Two layers of sequencing produce an arpeggio: the first is a sustained chord, the second is the arpeggiator stepping through that chord's notes. Run the arpeggiator extremely fast, at audio rate, and we no longer hear a sequence of discrete notes but a complex waveform with a single fundamental. Just like that, sequencing has become sound design.
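To put rough numbers on that, here is a minimal Python sketch. It assumes a simple arpeggio pattern that repeats once per pass through the chord, and it uses about 20 Hz as a rule-of-thumb floor for hearing pitch; the function names and the threshold are illustrative assumptions, and in practice the change from rhythm to tone is gradual rather than a hard switch.

```python
# Rule-of-thumb lower limit of pitch perception (an assumption, not a hard boundary).
AUDIBLE_PITCH_FLOOR_HZ = 20.0


def arpeggio_cycle_hz(step_rate_hz: float, notes_in_chord: int) -> float:
    """Rate at which the whole arpeggio pattern repeats, in Hz."""
    if notes_in_chord < 1:
        raise ValueError("need at least one note in the chord")
    return step_rate_hz / notes_in_chord


def heard_as(step_rate_hz: float, notes_in_chord: int) -> str:
    """Very rough guess at whether the pattern reads as notes or as one tone."""
    cycle = arpeggio_cycle_hz(step_rate_hz, notes_in_chord)
    return "tone" if cycle >= AUDIBLE_PITCH_FLOOR_HZ else "sequence"


# Four notes at 8 steps per second repeat twice a second: clearly a sequence.
print(heard_as(8, 4))
# Four notes at 1760 steps per second repeat 440 times a second: a pitched tone.
print(heard_as(1760, 4))
```

The chord you hold no longer sets which notes you hear in turn; it shapes the steps inside one cycle of the waveform, which is a timbral decision.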

These two practices—sequencing and sound design—are more ambiguous than they seem.

Perhaps we only see them as distinct from each other because of the workflows that we’re funneled towards by the technologies we use. Most of the machines and software we use to make electronic music reflect the designer’s expectations about how we work: sound design is what we are doing when we fill up the banks of patch slots on our synths; sequencing is what we do when we fill up the banks of pattern slots on our sequencers.

The ubiquity of MIDI also promotes the view of sequencing as an activity that has no connection to sound design. Because MIDI cannot be heard directly, and is mostly used to convey pitch, note length, and velocity, we tend to think that this is all sequencing is. But in a CV and gate environment, sequencers can do more than sequence notes: they can sequence any number of events, from filter cutoff adjustments to clock speed or the parameters of other sequencers.

Modular can change the way you see organized sound

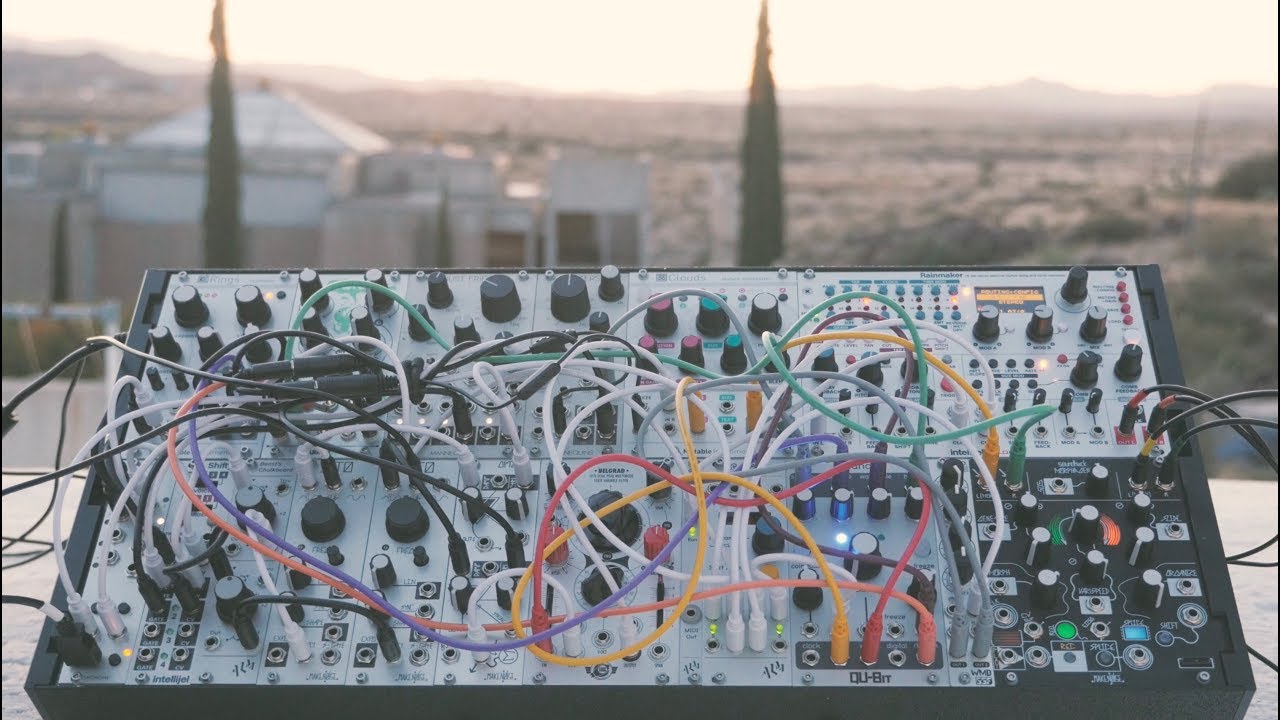

Spend some time exploring a modular synthesizer and these sharply distinct concepts quickly start to break down and blur together.

Most people don’t appreciate how fundamentally, conceptually different CV and gate are from MIDI. MIDI is a language, designed according to certain preconceptions (the tempered scale being the most obvious one). CV and gate, on the other hand, are the same stuff that audio is made of: voltage, acting directly upon circuits with no layer of interpretation in between. Thus, a square wave is not only an LFO when slowed down, or a tone when sped up; it is also a gate.

What that square wave is depends entirely on how you are using it.
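A small Python sketch can make the point concrete. It models an idealized square wave as a voltage over time and then reads that same voltage as a gate by comparing it to a threshold. The 0 V and 5 V levels, the 2.5 V threshold, and the function names are assumptions chosen for illustration; real modules differ in the levels they send and accept.

```python
def square(freq_hz: float, t: float, high: float = 5.0, low: float = 0.0) -> float:
    """Voltage of an ideal 50% duty square wave at time t (in seconds)."""
    phase = (freq_hz * t) % 1.0
    return high if phase < 0.5 else low


def gate_is_open(voltage: float, threshold: float = 2.5) -> bool:
    """Treat any voltage above the (assumed) threshold as an open gate."""
    return voltage > threshold


def rising_edges(freq_hz: float, duration_s: float, sample_rate: int = 1000) -> int:
    """Count how many times the gate opens, i.e. how many triggers fire."""
    count = 0
    previous = False
    for n in range(int(duration_s * sample_rate)):
        now = gate_is_open(square(freq_hz, n / sample_rate))
        if now and not previous:
            count += 1
        previous = now
    return count


# The same function is an LFO at 2 Hz, a tone at 440 Hz,
# and a source of two gate openings per second when read through a threshold.
print(rising_edges(2.0, 1.0))
```

Nothing in `square` knows which of those roles it is playing. The role is decided by whatever the voltage is patched into.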

You can say the same thing about most modules. They are what you use them for.

To go back to our original example: a sequencer can be clocked fast enough that its steps fuse into a single pitched tone, and that clock’s speed can itself be modulated by an LFO, so the voice that the sequencer is triggering goes from a discrete note sequence to a complex waveform and back again. The sound itself goes from sequence to sound effect and back to sequence.
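The patch described above can be approximated in a few lines of Python. This is only a sketch under simplifying assumptions: it models the sequencer's stepped CV output rather than the voice it drives, the LFO is a plain sine, and every name and parameter here (`render_sequence`, `lfo_depth_hz`, and so on) is hypothetical rather than taken from any particular module.

```python
import math


def render_sequence(steps, base_clock_hz, lfo_hz, lfo_depth_hz,
                    duration_s, sample_rate=48000):
    """Return the stepped output of a sequencer whose clock rate is swept by an LFO."""
    out = []
    phase = 0.0  # number of clock ticks elapsed so far, including the fractional part
    for n in range(int(duration_s * sample_rate)):
        t = n / sample_rate
        # The LFO pushes the clock rate above and below its base value.
        clock_hz = base_clock_hz + lfo_depth_hz * math.sin(2 * math.pi * lfo_hz * t)
        clock_hz = max(clock_hz, 0.0)  # a clock cannot run backwards
        # Hold the current step until the next tick arrives.
        out.append(steps[int(phase) % len(steps)])
        phase += clock_hz / sample_rate
    return out


# With a 220 Hz base clock and a depth of 218 Hz, the clock sweeps between
# 2 Hz (audible steps) and 438 Hz (the four steps fuse into one waveform).
cv = render_sequence([0.0, 1.0, 2.0, 3.0], base_clock_hz=220.0,
                     lfo_hz=0.1, lfo_depth_hz=218.0, duration_s=1.0)
print(len(cv))
```

With four steps, the pattern in that example repeats anywhere from once every two seconds to roughly 110 times a second, so a single slow LFO carries the patch across the line between sequence and tone.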

Do you find this way of looking at music-making productive and enjoyable, or do you prefer to stick to your well-trodden workflows? Does abandoning the sound design – sequencing – composition paradigm sound like a refreshing, freeing change to you? Or does it sound like a recipe for never finishing another track?

SEE ALSO: “How do I get started with modular?”
